AI Isn't a Software Business Anymore

AI Market Report March 2026: Google’s price war, America’s shadow-banking energy play, and why the whole AI stack just fractured into four industries — each with different economics.

It takes time to create work that’s clear, independent, and genuinely useful. If you’ve found value in this newsletter, consider becoming a paid subscriber. It helps me dive deeper into research, reach more people, stay free from ads/hidden agendas, and supports my crippling chocolate milk addiction. We run on a “pay what you can” model—so if you believe in the mission, there’s likely a plan that fits (over here).

Every subscription helps me stay independent, avoid clickbait, and focus on depth over noise, and I deeply appreciate everyone who chooses to support our cult.

PS – Supporting this work doesn’t have to come out of your pocket. If you read this as part of your professional development, you can use this email template to request reimbursement for your subscription.

Every month, the Chocolate Milk Cult reaches over a million Builders, Investors, Policy Makers, Leaders, and more. If you’d like to meet other members of our community, please fill out this contact form here (I will never sell your data nor will I make intros w/o your explicit permission)- https://forms.gle/Pi1pGLuS1FmzXoLr6

Every month, I break down the most important developments in AI. Usually, they cluster around a theme — a new model race, a pricing shift, a regulatory wave. March was different. March was the month where AI stopped behaving like a software industry.

Let me explain what I mean.

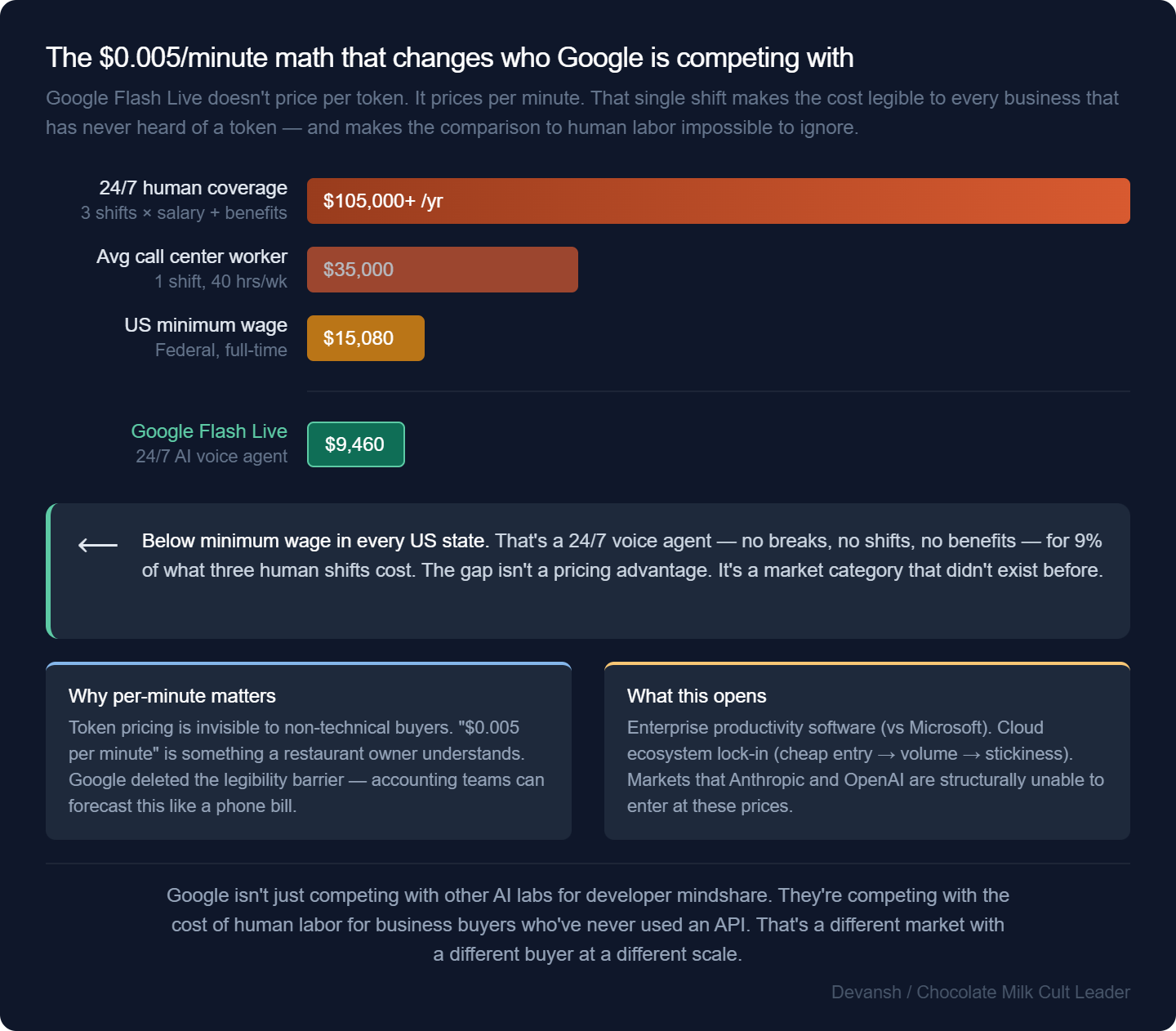

Google dropped voice AI pricing to $0.005 per input minute. At the $0.018 per minute output rate, a 24/7 voice agent costs about $25 a day. That’s below minimum wage in every US state. NVIDIA shipped a CPU designed not for training models but for orchestrating tens of thousands of agents running simultaneously. And the Western AI ecosystem quietly locked up over $120 billion in financing, not to build better models but to brute-force energy contracts, because the real bottleneck isn’t intelligence anymore. It’s the 50-year-old transformer at your local utility that can’t handle the load.

If you zoom out, these aren’t separate stories. They’re the same story. The AI stack is fracturing into distinct economic layers — a commodity inference utility, an industrial infrastructure play, a workflow SaaS layer, and a compliance tollbooth — and each one is starting to behave like the industry it touches rather than the tech industry that built it. Google is fighting a utility price war. NVIDIA is acting like an industrial architect. OpenAI is doing shadow banking. None of this looks like software. Because it isn’t.

That’s what we’re unpacking this month. Specifically, we’ll cover:

How Google is weaponizing dirt-cheap AI to lock markets no one else can touch — and how OpenAI, Microsoft, and NVIDIA are each scrambling to respond with very different bets

Why the real AI bottleneck is power, not compute — the US grid vs. China’s grid, NVIDIA’s pivot to industrial architecture, and the shadow-banking financing play that’s quietly underwriting the entire Western AI buildout

The four economic layers hiding inside every AI company — and why evaluating this industry with standard SaaS metrics is going to get a lot of people burned

Let’s get some bread.

Executive Highlights (TL;DR of the Article)

(read the actual sections for the full data and breakdowns, these are just to give you the overview)

The Price War.

Google dropped Gemini Flash Live to $0.005 per input minute. At $0.018/min output, a 24/7 voice agent costs ~$25/day ($9,460/year), below minimum wage in every US state. The plan is simple: collapse the cost until entirely new markets open that OpenAI and Anthropic can’t reach. Google can sustain this — insane ad profits, vertical integration from silicon to cloud, and nowhere else to deploy that capital at scale.

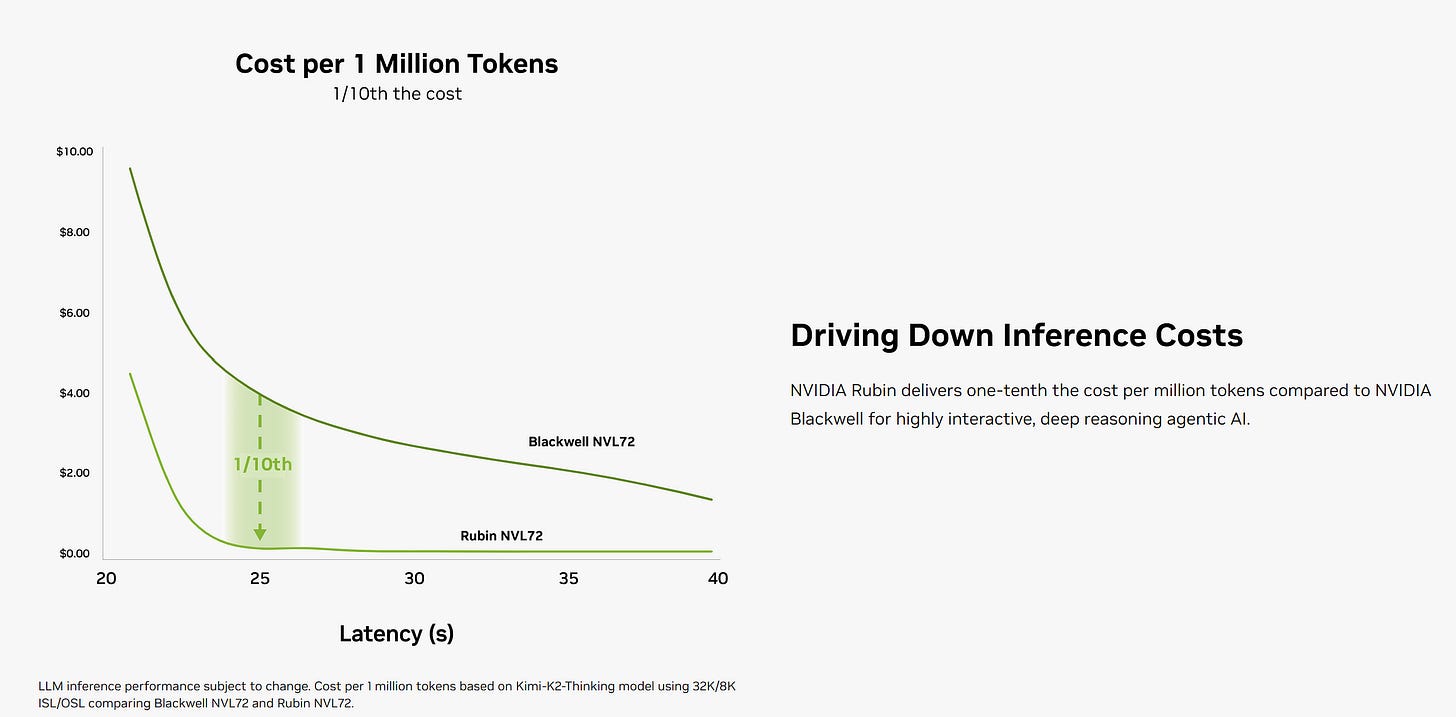

Everyone else is repositioning. OpenAI closed $122B and immediately bought Astral (the company behind uv and Ruff) — betting that owning the best Python sandbox matters more than having the best model. Microsoft is pushing Copilot Cowork into Office 365, routing between OpenAI and Anthropic natively, betting bureaucratic friction keeps enterprises locked in. NVIDIA shipped the Vera CPU for agentic orchestration — 22,500 concurrent environments per liquid-cooled rack — productizing the AI factory itself. Whoever’s frontier model wins, NVIDIA collects the orchestration toll at scale.

The Power Bottleneck.

Dirt-cheap intelligence means nothing without physical infrastructure to run it. The real bottleneck in 2026 isn’t GPUs — it’s the local utility company telling your $500M data center it’ll melt their 50-year-old transformer.

NVIDIA and Emerald AI are designing data centers as dispatchable grid assets — they proved they can curtail facility demand by a third in under a minute. When a heat dome hits, the AI factory throttles to keep the grid stable, which gets them ahead in the permitting arms race.

However, the geopolitical gap is brutal.

The US grid: ~1.37 TW, aging infrastructure, local bureaucracy.

China’s grid: ~3.89 TW, added 500 GW last year alone, state-mandated expansion with no permitting friction.

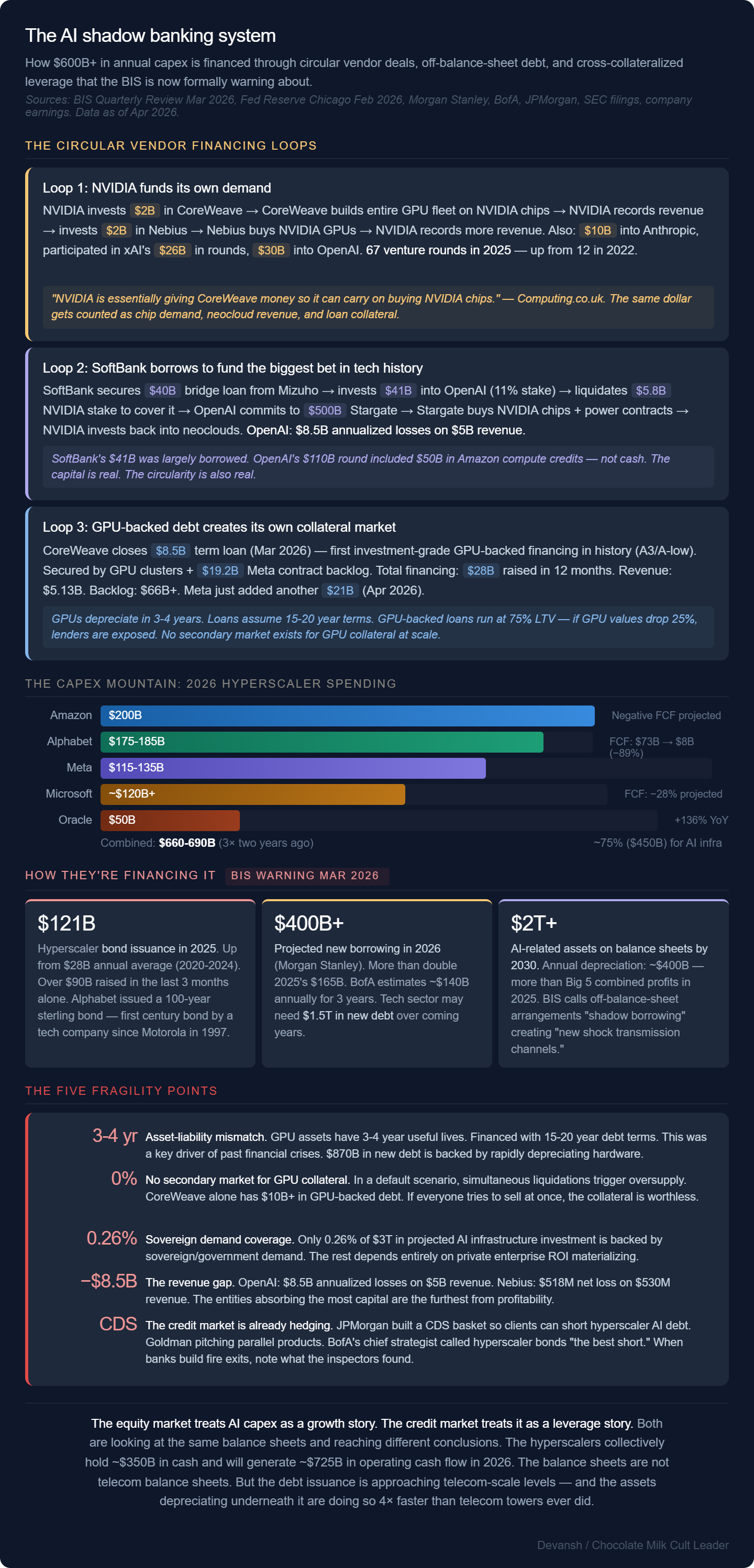

The Western response is shadow banking: NVIDIA pumped $2B into Nebius (targeting 5 GW by 2030), OpenAI locked up $122B, hyperscalers are building their own grids on debt.

When an enterprise signs a major AI contract today, they think they’re buying software. They’re actually buying into a highly leveraged financial cascade. If enterprise ROI on agents takes 24 months instead of 12, the debt servicing cracks and those artificially cheap API prices violently correct.

The Stack Fracture.

AI is no longer one industry. It’s four stacked economic layers, each behaving like the industry it touches:

Inference utility — commodity price wars, capital-intensive, defined by physical deployment limits. This is where Google is fighting.

Hardware infrastructure — industrial project finance, entirely dependent on power contracts, interconnection queues, and local permitting. This is where the shadow banking lives.

Workflow and distribution — traditional SaaS economics, higher margins, sits closest to the customer and owns the business process. Microsoft and Anthropic are fighting here.

Compliance and orchestration — tollbooths for enterprise deployment. Strong economics from resolving the friction of getting AI approved and running, not from intelligence itself.

The market hasn’t recognized this fracture. Analysts still evaluate the entire AI stack using SaaS metrics (ARR, seat expansion) while margin leaks to utilities, hardware financiers, and compliance layers. The correct framing: AI behaves more like the industries it penetrates than the tech industry that spawned it.

I provide various consulting and advisory services. If you‘d like to explore how we can work together, reach out to me through any of my socials over here or reply to this email.

Section 1. Hello Jevons, Old Friend

We spent February mapping out how labs survive commodity intelligence. We broke this into 3 strategies:

Google’s ecosystem funnel.

Anthropic’s capability lock-in.

The Chinese labs’ brutal volume-to-efficiency flywheel.

Why are we bringing up old news? Because in March, Google fully embraced this dynamic by dropping two nukes. First came Gemini 3.1 Flash-Lite at $0.25 per million input tokens, clearly a play to entrench further into the ecosystem. Then they followed up with the actual kill-shot: Gemini Flash Live at $0.005 per minute of input. Not per token. Per minute.

Token-based pricing has been a huge blocker keeping many low-tech enterprises from adopting voice models. Going per-minute deletes that blocker. At $0.018 per output minute, a 24/7 voice agent costs ~25 bucks a day ($9,460 per year, below minimum wage in every U.S. state). The cost drop and extreme legibility open the market for the Big G in two ways:

It makes accounting easier.

It is cheap enough for companies with small budgets.

Both will be a huge draw for the “AI in the real world” push that many companies covet. Google’s insane profits and relative lack of other places to invest in the short term make this a great bet for them. They can outlast most in a battle of attrition, and their extreme vertical integration means they can test things at scales unavailable to their biggest competitors.
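The per-minute arithmetic above is easy to sanity-check. The sketch below assumes an agent that is billed for every minute of the day at a single flat rate. The function name `always_on_cost` and the full-time-hours constant are illustrative choices, not anything Google publishes, and the $7.25 federal minimum wage is used only as a rough floor for comparison.

```python
MINUTES_PER_DAY = 24 * 60  # 1,440 billable minutes for an always-on agent

# Per-minute rates quoted in the article for Gemini Flash Live.
INPUT_RATE = 0.005   # USD per input minute
OUTPUT_RATE = 0.018  # USD per output minute


def always_on_cost(rate_per_minute: float, days: int = 1) -> float:
    """Cost in USD of billing every minute of `days` days at a flat rate."""
    return round(rate_per_minute * MINUTES_PER_DAY * days, 2)


daily_output = always_on_cost(OUTPUT_RATE)        # 25.92
annual_output = always_on_cost(OUTPUT_RATE, 365)  # 9460.8
daily_input = always_on_cost(INPUT_RATE)          # 7.2

# Assumption for comparison only: one full-time worker (2,080 hours a year)
# at the $7.25 federal minimum wage.
FULL_TIME_HOURS = 2080
min_wage_annual = 7.25 * FULL_TIME_HOURS  # 15080.0

print(f"output-rate agent: ${daily_output}/day, ${annual_output}/year")
print(f"input-rate agent:  ${daily_input}/day")
print(f"full-time minimum wage: ${min_wage_annual}/year")
```

The ~$25 a day and $9,460 a year figures correspond to the output rate; the input rate alone works out to about $7 a day.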

All these factors point us to one thing: Google is planning to bring AI down to rates no one else can compete at, and use that to lock markets that OAI and Anthropic won’t be able to touch. And given how badly Microsoft has shit the bed on models, Microsoft is unlikely to be able to compete either.

Unlocking Jevons Paradox and extreme low-cost AI also opens up two new spaces for them:

They’ve been eyeing the juicy enterprise productivity software space (MS Office, etc.). Being able to put more intelligent models into their own products will allow them to steal this lead. They beat Microsoft by being better than whatever its solution is. They beat Claude CoWork by coming in much cheaper and integrating into more surfaces (Workspace, email, etc.). They’re the only ones who can do both.

They can have a major differentiator from the other cloud providers, using their models as a trap to pull people into the Google Cloud ecosystem. They’ve failed at this twice, but they’ve made several important changes to facilitate this and are genuinely doing much better on this front.

Google is playing a very mean game (they’ve been playing it for a bit, but this was them escalating), one they’re uniquely positioned to win. Many other players in the ecosystem have acknowledged this position and reconfigured their strategies accordingly.

OpenAI closed a staggering $122B round and immediately bought Astral, grabbing the underlying Python primitives like uv and Ruff. Coding agents don’t fail at reasoning; they fail at dependency resolution and environment execution. The call is simple: own the best sandbox, and people will forgive a slightly worse model. (Ironically, Anthropic’s Claude Code is beating Codex for precisely this reason: it’s worse on intelligence but significantly easier to use.) They’re also betting on a mini-Jevons Paradox of their own, especially when it comes to outlasting Anthropic (which has been struggling to meet usage demand).

Microsoft is trying that same “own the interface” play for the enterprise, pushing Copilot Cowork into the background of Office 365. By routing tasks between OpenAI and Anthropic natively, they’re trying to create enough stickiness that bureaucratic friction keeps people with them.

And then there’s NVIDIA. If agents are cheap and legible, volume goes vertical. But agents require heavy state management and ephemeral compute. NVIDIA saw the shift and shipped the Vera CPU explicitly for agentic orchestration. One liquid-cooled rack, 22,500 concurrent environments. They are productizing the AI factory. Run whatever frontier model you want — when you scale to 50,000 background agents, you pay NVIDIA’s toll to orchestrate the hardware.

This is where the next part of our story becomes interesting. We’re spawning software innovations at a violently ferocious rate. But one may sketch out a thousand love stories with their crush, and never get anywhere since they never act on it in the physical world. Similarly, all software innovations are merely theoretical if not deployed properly in the hard, physical world. And we’re really starting to hit limits to how hard we can get, and how long we can maintain our hardness.

Section 2. Your Hardware Needs More Blood-Flow

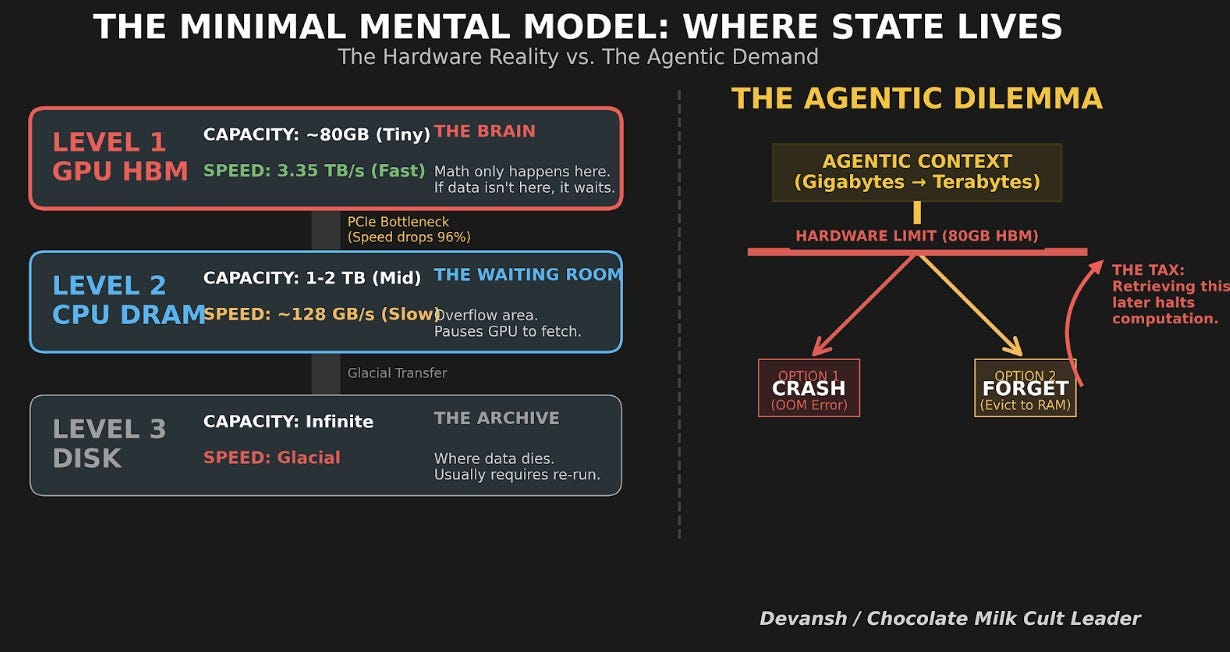

Section 1 gave us dirt-cheap intelligence. You can now spawn a million voice agents for the price of Paddy Pimblett’s striking defense. Beautiful. But a million agents in a pitch deck is easy; a million agents in the real world require racks, cooling, and power contracts. Someone has to babysit the gibbets that never sleep, maintain a constant state (read our deep dive on Weka for why that’s a problem), and call APIs every six seconds.

And you gotta house the monkeys too.

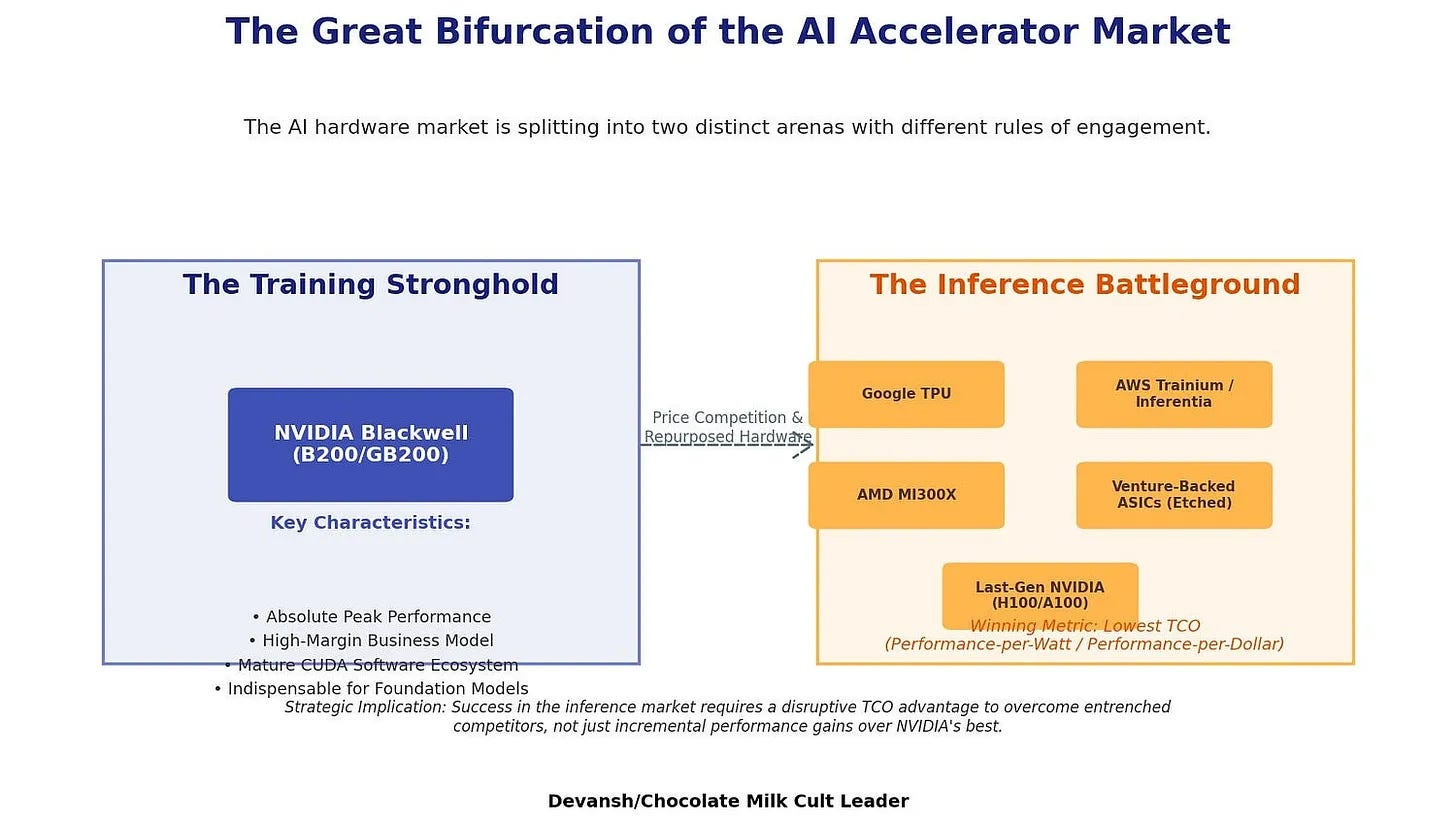

Forget the GPU shortages for a sec. GPUs will become cheap enough when Crypto Timmy sells his mining rigs to pay for his new sports betting business. And since most of these agents will skew towards inference, you can always give the ASIC boiz a shout and let them get you better inference. So there are options when it comes to compute.

The actual bottleneck in March 2026 is the local utility company looking at Sama’s proposed $500 million AI factory and telling him it will melt their 50-year-old transformer. If you can’t get permission to plug in, your supercomputer is just a highly advanced, very expensive brick.

NVIDIA knows this, which is why they stopped acting like a chip vendor and started acting like an industrial architect. Look at the Vera CPU and the DSX stack. NVIDIA pre-engineers the entire rack from top to bottom: the GPUs for compute, the Vera CPUs for agent orchestration, the networking switches, the BlueField security units, and the exact liquid-cooling specs. They even provide a digital simulation to test thermal loads before construction begins (this is the same playbook used by Intel for PCs). You get easier deployments; NVIDIA keeps you swallowing their load.

But the blueprint doesn’t solve the power grid. That’s where the Emerald AI and NVIDIA Flex integration comes in.

How do you get a utility to approve a massive data center? You prove you can turn it off.

Emerald and NVIDIA are designing AI factories as “dispatchable grid assets.” They proved they could curtail facility demand by a third in under a minute. When a heat dome hits, the utility tells the AI factory to throttle down, and it sheds that load almost instantly while keeping priority tasks alive. This keeps the grid stable, letting them get ahead in the permissions arms race.
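One way to picture a “dispatchable” facility is a scheduler that pauses the least important work first until the requested cut is met. The sketch below is a toy model, not Emerald AI’s or NVIDIA’s actual control logic: the `Job` type, the `curtail` function, the job names, and the kilowatt figures are all hypothetical, and a real system would more likely throttle jobs than pause them outright.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    power_kw: float
    priority: int  # higher number = more important to keep running


def curtail(jobs: list[Job], fraction: float) -> tuple[list[str], float]:
    """Pause lowest-priority jobs until at least `fraction` of load is shed.

    Returns the names of the paused jobs and the kilowatts shed.
    """
    target_kw = sum(job.power_kw for job in jobs) * fraction
    paused: list[str] = []
    shed_kw = 0.0
    for job in sorted(jobs, key=lambda j: j.priority):
        if shed_kw >= target_kw:
            break
        paused.append(job.name)
        shed_kw += job.power_kw
    return paused, shed_kw


# Hypothetical 1 MW facility with made-up workloads.
facility = [
    Job("live-voice-agents", 300.0, priority=3),
    Job("checkpointing", 100.0, priority=2),
    Job("batch-evals", 200.0, priority=1),
    Job("batch-training", 400.0, priority=0),
]

# The utility asks for a one-third cut, matching the figure cited above.
paused, shed_kw = curtail(facility, fraction=1 / 3)
print(paused, shed_kw)
```

In this made-up facility, pausing the single lowest-priority job already clears the one-third target, and the live voice agents never notice.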

But taking a step back exposes a brutal geopolitical reality.

The US grid sits at roughly 1.37 Terawatts and is choked by aging infrastructure and local bureaucracy. China is sitting on roughly 3.89 Terawatts and added 500 Gigawatts last year alone. China’s AI ecosystem doesn’t have to beg local municipalities for interconnection rights or build software to purposely throttle their GPUs during a hot summer since the CCP just mandates the grid expansion. The CCP also has the physical runway to natively absorb the explosion in agent volume.
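To put those grid figures side by side, here is a quick back-of-the-envelope calculation using only the numbers quoted above, treated as rough capacity estimates. The variable names are illustrative.

```python
US_GRID_TW = 1.37      # approximate US grid capacity cited above
CHINA_GRID_TW = 3.89   # approximate Chinese grid capacity cited above
CHINA_ADDED_GW = 500   # capacity China added last year, per the article

# How many US grids fit inside China's grid.
capacity_ratio = CHINA_GRID_TW / US_GRID_TW

# One year of Chinese additions as a share of the whole US grid.
added_share_of_us = (CHINA_ADDED_GW / 1000) / US_GRID_TW

print(f"China's grid is about {capacity_ratio:.1f}x the US grid")
print(f"One year of additions is about {added_share_of_us:.0%} of the US grid")
```

Put differently, on these numbers China added a bit over a third of the entire US grid in a single year.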

So how does the West compete with a state-mandated 4-Terawatt grid?

Shadow banking:

NVIDIA pumped $2 billion into Nebius (explicitly targeting a massive 5 GW of capacity by 2030).

OpenAI locked down $122 billion.

Multiple providers building their own grids (including hyperscalers taking on lots of debt).

Mounds of circular financing.

The Western players are cross-collateralizing each other to brute-force energy contracts and buy compute at gigawatt scale.

This fundamentally shifts the risk. When an enterprise signs a massive AI contract today, they think they are buying software. They aren’t. They are buying into a highly leveraged financial cascade. The underlying gigawatt factories are funded by debt. If the enterprise ROI on these agents takes 24 months to materialize instead of 12, the debt servicing on that infrastructure is going to crack, and those artificially cheap API prices will violently correct.
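A toy calculation shows why the 12-versus-24-month difference matters. Every number below is hypothetical (the principal, the interest rate, the cash reserve), and the model is deliberately crude: interest-only debt, no revenue until the payoff month, and a fixed reserve set aside to cover the carry. `funding_gap` is an illustrative name, not a standard finance function.

```python
def funding_gap(principal: float, annual_rate: float,
                reserve: float, months_until_revenue: int) -> float:
    """Interest owed before revenue arrives, minus the reserve (floored at 0)."""
    monthly_interest = principal * annual_rate / 12
    carry = monthly_interest * months_until_revenue
    return max(0.0, carry - reserve)


# Hypothetical inputs: $10B of debt at 8% interest, $1B reserved for carry.
PRINCIPAL = 10e9
RATE = 0.08
RESERVE = 1e9

gap_12 = funding_gap(PRINCIPAL, RATE, RESERVE, months_until_revenue=12)
gap_24 = funding_gap(PRINCIPAL, RATE, RESERVE, months_until_revenue=24)

print(f"ROI in 12 months: shortfall ${gap_12 / 1e9:.2f}B")
print(f"ROI in 24 months: shortfall ${gap_24 / 1e9:.2f}B")
```

With these made-up inputs, the reserve fully covers a 12-month wait, but a 24-month wait leaves a $0.6B hole that has to come from somewhere, and higher API prices are one obvious place.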

This creates an interesting dynamic where both AI arms races are concentrated in very few players:

The CCP, for China. As long as AI remains a priority, it can fight forever, but a single priority shift can stall the whole thing. Central planning might also hamper long-term adaptation, since top-down mass manufacturing is good for efficiency but not for anticipating how things change.

Shadow banking and vendor financing, for the West. It only needs one domino to tumble over.

It should come as no surprise that regulators are watching all of this with keen interest. March was a pretty active month for them.

Section 3. Where Do We Go From Here

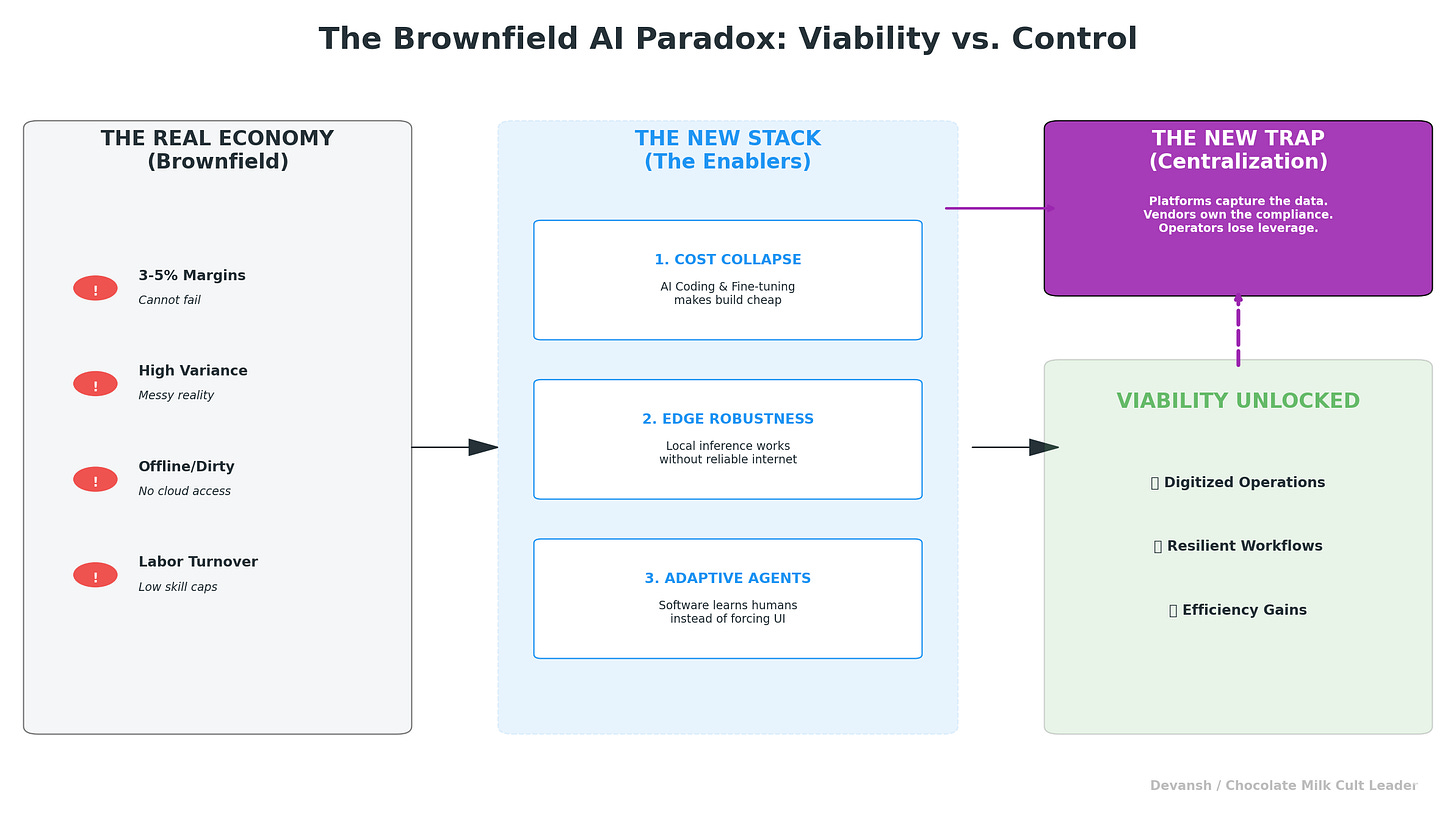

The traditional tech model is simple: build software once, sell it infinitely, and keep the margins high. That works for classic SaaS, where the physical world is mostly background noise.

However, AI is fracturing this clean software model into multiple distinct economic categories.

The baseline model and inference layer are becoming a capital-intensive utility, defined by price wars and physical deployment limits.

At the same time, the underlying hardware layer operates more like industrial project finance, entirely reliant on power contracts, interconnection queues, and local permitting.

Above those utility and infrastructure layers, the economics change again.

The workflow and distribution layer still resembles traditional SaaS, maintaining higher margins because it sits closest to the customer and owns the actual business process.

Beside it sits a new category of compliance, governance, and orchestration tools. These act as necessary tollbooths for enterprise deployment, generating strong economics not from baseline intelligence, but by resolving the friction of getting AI approved and running in the real world.

The market has not fully recognized this stack fracture. Analysts continue to evaluate the entire AI industry using standard software metrics like annual recurring revenue and seat expansion. By doing so, they are ignoring how much margin is now leaking to utilities, hardware financiers, and compliance layers. Much like the technology itself, AI isn’t one industry or category: it’s a clusterfuck of multiple sub-categories, each behaving in ways unique to its set of constraints. It sits closer to the industries it plays in than to the tech industry that spawned it.

The implications for financing, pricing, and what gets built will be very interesting to see. My bet is on the low-tech revolution, which we covered here. Share your takes on where things go from here below.

Thank you for being here, and I hope you have a wonderful day,

Dev <3

If you liked this article and wish to share it, please refer to the following guidelines.

That is it for this piece. I appreciate your time. As always, if you’re interested in working with me or checking out my other work, my links will be at the end of this email/post. And if you found value in this write-up, I would appreciate you sharing it with more people. It is word-of-mouth referrals like yours that help me grow. The best way to share testimonials is to share articles and tag me in your post so I can see/share it.

Reach out to me

Use the links below to check out my other content, learn more about tutoring, reach out to me about projects, or just to say hi.

Small Snippets about Tech, AI and Machine Learning over here

AI Newsletter- https://artificialintelligencemadesimple.substack.com/

My grandma’s favorite Tech Newsletter- https://codinginterviewsmadesimple.substack.com/

My (imaginary) sister’s favorite MLOps Podcast-

Check out my other articles on Medium:

https://machine-learning-made-simple.medium.com/

My YouTube: https://www.youtube.com/@ChocolateMilkCultLeader/

Reach out to me on LinkedIn. Let’s connect: https://www.linkedin.com/in/devansh-devansh-516004168/

My Instagram: https://www.instagram.com/iseethings404/

My Twitter: https://twitter.com/Machine01776819

From the perspective of national competition, I agree with Devansh’s central point: AI competition is rapidly moving beyond the old framework of intra-software industry rivalry and increasingly starting to look like a composite contest of state capacity, industrial systems, energy systems, and financial power. The core bottleneck in AI by 2026 is no longer simply whether a country has access to enough GPUs. It is whether it can run massive numbers of intelligent agents cheaply, reliably, compliantly, and at scale. And what truly constrains that expansion is often not the chip itself, but grid access, data center construction, cooling, financing, and the friction of enterprise deployment. In that sense, the most important dividing line in U.S.-China competition is whether a country possesses the conditions necessary to convert laboratory capability into economy-wide deployment.

America’s strengths have long been concentrated in frontier models, top-tier talent, software ecosystems, capital markets, and global platforms. China’s potential advantages, by contrast, are increasingly showing up in stronger power-system expansion, more complete manufacturing supply chains, faster infrastructure buildout, and higher state coordination capacity. The United States is using its superior ability to mobilize capital to offset its infrastructure weaknesses. That is a classic American strength, but also a classic American risk. The U.S. excels at securitizing future expectations, leveraging them, and capitalizing them early, which is why it often moves extraordinarily fast in the early stages of a new industrial wave. But if physical infrastructure and real-world deployment fail to keep pace, then financial front-loading can transform what should have been a medium- to long-term industrial construction challenge into an asset-liability problem much earlier than expected.

At the same time, the United States should not be underestimated. America’s real advantage is not simply that it can raise money. It is that it can connect research, entrepreneurship, venture capital, public-market exits, global customers, platform ecosystems, and legal institutions into a highly efficient innovation amplifier. Even with aging local grids and fragmented permitting, the United States may still gradually rebuild parts of a new AI infrastructure system through market-based power purchase agreements, privately developed energy assets, hyperscaler vertical integration, and regulatory adaptation. Historically, the U.S. has repeatedly demonstrated a remarkable ability to generate new financing tools, new infrastructure models, and new industrial alliances under pressure. In many cases, it is precisely through bubbles, overfinancing, and seemingly wasteful competition that America ends up selecting the true infrastructure winners of the next cycle. Its system is often messy, expensive, and inefficient, yet it is also capable of producing new forms of industrial dominance out of that very chaos.

In my view, AI industry competition should really be understood as the combination of seven interlocking layers: energy, chips, cloud, models, open-source ecosystems, enterprise software entry points, and real-world deployment scenarios. China is stronger in energy, infrastructure speed, manufacturing depth, and some application environments. The United States remains stronger in advanced chips, the global software ecosystem, cloud platforms, capital markets, and original technology networks. What will ultimately determine the outcome is not which side is stronger at any single node, but which side can combine these capabilities into a sustainable closed loop.

That is why the future is more likely to produce layered leadership than unilateral dominance. Once AI becomes sufficiently cheap and sufficiently widespread, the decisive question will no longer be who built the smartest model in the lab. It will be who has the stronger industrial organizational capacity to absorb, deploy, power, finance, regulate, and embed intelligence into the broader economy.